DataCenter Knowledge recently published an article exploring the growing use of machine learning in data center cooling automation, stating that “Many enterprises routinely over-cool their data centers with the goal of preventing failure. Not only is this blunt-force approach extremely inefficient, it doesn’t guarantee that none of the IT equipment will overheat.”

Vigilent president and CTO Cliff Federspiel was featured in the article:

A misconfigured or failed cooling unit, for example, would be shown on the map as having little or no influence, Cliff Federspiel, Vigilent president and CTO, explained to us. The results are often counterintuitive, which is an area where ML is especially effective, since it doesn’t rely on intuition like humans do. A data center technician may hear a cooling unit run, see it vibrate, feel a cool air stream coming out of it, and conclude that it’s performing as expected. But the influence map may show that it’s making no contribution to cooling the IT gear inside the facility.
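To make the "little or no influence" case concrete, here is a minimal sketch, not Vigilent's implementation. It assumes the influence map is stored as a dictionary from each cooling unit to the estimated temperature rise (in °C) it would cause at each rack sensor if switched off. The function name, unit names, and the 0.1 °C threshold are hypothetical. A unit whose largest effect on any sensor stays below the threshold is flagged for inspection, even if it appears to be running normally.

```python
def low_influence_units(influence, threshold=0.1):
    """Return units whose largest effect on any sensor is below threshold.

    influence: dict mapping unit name -> list of estimated temperature rises
    (degrees C) at each rack sensor if that unit were switched off.
    This representation is a hypothetical illustration.
    """
    return sorted(
        unit
        for unit, effects in influence.items()
        if max(effects, default=0.0) < threshold
    )


# Hypothetical influence map for three cooling units and four rack sensors.
influence = {
    "CRAH-1": [1.8, 0.9, 0.2, 0.0],
    "CRAH-2": [0.1, 0.6, 1.4, 0.3],
    "CRAH-3": [0.02, 0.05, 0.01, 0.0],  # runs, but barely affects any rack
}
print(low_influence_units(influence))  # ['CRAH-3']
```

In practice a flagged unit would still need a physical check; a low estimate could also come from noisy sensor data rather than a genuine fault.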

“You can also figure out which cooling units are super important,” so you can pay special attention to them, Federspiel said. “Because if it fails, it will have a bigger effect on the temperature of the floor than if another were to fail.” In other cases the data may show that there’s enough cooling redundancy for most of the room, while one local spot only relies on a single unit. That spot may house some critical equipment that would fail if that one unit fails, wreaking havoc for the organization.
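Under the same hypothetical representation (unit name mapped to per-sensor temperature rise in °C), the two checks described here can be sketched as follows: count how many units have a significant effect on each sensor, and rank units by their total effect. The 0.5 °C significance threshold, the function names, and the use of the summed effect as a stand-in for a unit's importance are assumptions for illustration only.

```python
def single_unit_spots(influence, significant=0.5):
    """Map each sensor index that depends on exactly one unit to that unit's name.

    influence: dict unit name -> per-sensor temperature rise (degrees C) if the
    unit were switched off (hypothetical representation).
    """
    n_sensors = len(next(iter(influence.values())))
    spots = {}
    for s in range(n_sensors):
        # Units whose loss would noticeably warm this sensor.
        units = [u for u, effects in influence.items() if effects[s] >= significant]
        if len(units) == 1:
            spots[s] = units[0]  # no redundancy at this spot
    return spots


def rank_by_importance(influence):
    """Order units by total estimated effect across all sensors, largest first."""
    return sorted(influence, key=lambda u: sum(influence[u]), reverse=True)


influence = {
    "CRAH-1": [1.8, 0.9, 0.2, 0.0],
    "CRAH-2": [0.1, 0.6, 1.4, 0.3],
    "CRAH-3": [0.6, 0.7, 0.1, 0.9],
}
print(single_unit_spots(influence))   # {2: 'CRAH-2', 3: 'CRAH-3'}
print(rank_by_importance(influence))  # ['CRAH-1', 'CRAH-2', 'CRAH-3']
```

In this made-up example, sensor 2 depends only on CRAH-2 and sensor 3 only on CRAH-3, so losing either unit would leave that spot without backup cooling, even though the room as a whole has three units.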

Misdirected Air

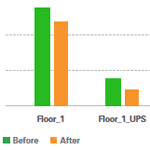

The influence map can also uncover more fundamental airflow issues, such as badly placed perforated tiles. Such basic issues are more widespread than one might assume. “Almost every site we go into that has a raised floor or ducted airflow, the air delivery is really not very effective,” Federspiel said. “The visualizations we have are very effective at helping get manual air delivery back to where it needs to be.”

The rise of free cooling (which is now mandated for new US data centers) brings its own problems, especially if summer temperatures require a combination of free and mechanical cooling, which is difficult to configure correctly and prone to failure. “More than half the time customers are not getting their money’s worth for their free cooling,” Federspiel said.

Vigilent also uses machine learning to make daily operations more efficient by running “what-if” scenarios and turning cooling units on and off briefly to discover optimization opportunities. “A lot of these buildings are designed to be highly redundant and have extra capacity, but they’re not designed with the instrumentation and telemetry to know how much extra capacity there is,” he said. In a use case similar to Google’s, the software can change temperature setpoints, slow fans down, or turn cooling units off entirely to save energy, delivering only the amount of cooling the current IT workload needs – and it retrains periodically to stay up to date.
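The idea of briefly switching units off to learn how much each one contributes can be illustrated with a simple one-at-a-time perturbation experiment, followed by a "what-if" prediction. This is a deliberately simplified stand-in, not Vigilent's actual model. It assumes temperatures are read only after they settle, that the IT load is steady during the test, and that the effects of switching off several units add linearly. The function names, readings, and temperature limits below are hypothetical.

```python
def estimate_influence(baseline, off_readings):
    """One-at-a-time perturbation estimate of each unit's influence.

    baseline: sensor temperatures (degrees C) with every unit running.
    off_readings: dict unit -> sensor temperatures measured while only that
    unit was switched off.
    Returns dict unit -> per-sensor temperature rise attributed to that unit.
    """
    return {
        unit: [round(t_off - t_base, 3) for t_off, t_base in zip(temps, baseline)]
        for unit, temps in off_readings.items()
    }


def what_if_off(baseline, influence, units_off, limit):
    """Predict temperatures with units_off switched off, assuming linear additivity.

    Returns (predicted temperatures, True if every sensor stays at or below limit).
    """
    predicted = list(baseline)
    for unit in units_off:
        predicted = [p + d for p, d in zip(predicted, influence[unit])]
    return predicted, all(p <= limit for p in predicted)


baseline = [22.0, 23.0, 24.0]  # all units running
off_readings = {
    "CRAH-1": [23.5, 23.4, 24.1],  # readings with only CRAH-1 off
    "CRAH-2": [22.2, 23.9, 25.6],  # readings with only CRAH-2 off
}
influence = estimate_influence(baseline, off_readings)
print(influence)  # {'CRAH-1': [1.5, 0.4, 0.1], 'CRAH-2': [0.2, 0.9, 1.6]}

_, ok = what_if_off(baseline, influence, ["CRAH-1"], limit=27.0)
print(ok)  # True: CRAH-1 could be a candidate for switching off
_, ok = what_if_off(baseline, influence, ["CRAH-1", "CRAH-2"], limit=25.0)
print(ok)  # False: one sensor would be predicted to exceed the limit
```

Re-running the perturbation tests on a schedule would be one simple way to keep the estimates current as the IT load changes, in the spirit of the periodic retraining described above.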

If the software indicates significant overcapacity, data center operators can add more servers and racks without adding more cooling, or repurpose existing cooling equipment. It’s also possible to use Vigilent to move workloads around the data center to take full advantage of the available cooling capacity, Federspiel said.